What follows is an introduction to well-architected input design — the principles, the vocabulary, and the structure that holds up once a game grows past the tutorial stage.

If you’ve ever followed a Unity tutorial, you’ve probably written something like this:

```csharp
void Update()
{
    if (Input.GetKeyDown(KeyCode.Space))
    {
        transform.position += Vector3.up * 5f;
    }
}
```

Press space, the cube goes up. The first time it works, it feels a little bit like magic. You’ve made a thing react to a button. That’s a real milestone, and there’s nothing wrong with celebrating it.

But the correct reaction to this code, once the celebration wears off, is “Sir, this is disgusting.”

I’m only half-joking. There are honestly more than a dozen things wrong with this seven-line snippet, and we’ll get to most of them by the end of this post. Let’s start with the most glaring one — the one that, once you see it, will already start changing how you write input code. Don’t worry if any of the terms feel new; I’ll explain each one as we go.

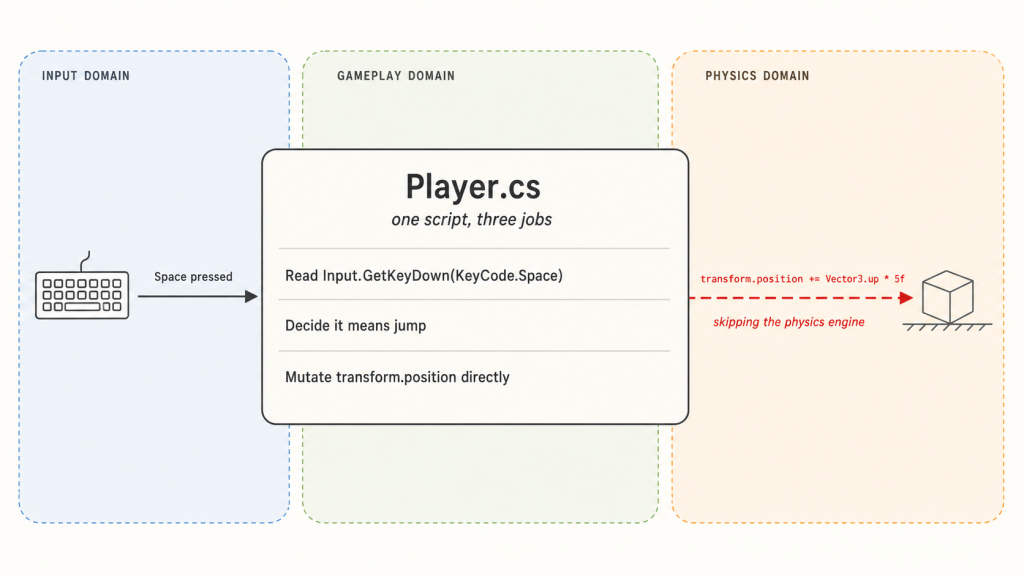

One script, three jobs

Look at the last line: transform.position += Vector3.up * 5f. That isn’t input code — it’s physics code, sitting in the wrong file. The script was supposed to be listening for a keypress. Somewhere along the way, it also decided to override gravity, ignore collisions, move the object instantly, and overrule anything else in the scene that might have an opinion about where this object is allowed to be. None of those decisions are input’s to make.

It feels fine for the first fifteen minutes because the scene is empty — no ceiling to clip through, no “frozen” status effect to honor, no cutscene that needs to take control. But the moment any of those things exist, the rule for how the object moves turns out to be scattered across every script that listens for a keypress. If you want to add a single “can’t move while frozen” check, you’ll have to find and patch each one. You will miss one. Everyone misses one.

Programmers have a name for the principle being broken here: separation of concerns. It’s a fancy phrase for a simple idea — each part of your code should have one clear job, and shouldn’t reach into someone else’s job.

The script we started with collapses two genuinely distinct jobs into one blob. The first is figuring out what the player wants — translating the noisy stream of hardware events (key presses, mouse clicks, gamepad buttons, touchscreen taps) into clean intent. The second is figuring out what to do about it — checking whether the action is currently legal, how it interacts with the rest of the game, and what it ultimately changes in the world. “The player wants to jump” is the natural boundary between the two. Whether jumping is currently allowed, how high it goes, whether a ceiling stops it — those questions all live on the far side of that boundary. Everything upstream is one system; everything downstream is another. The rest of this post is, more or less, an extended argument for treating that boundary seriously — first by recognizing it exists, then by building each side of it properly.

Let’s start fixing it. The first move is to pull the two halves apart in the most literal way possible.

Pulling the halves apart

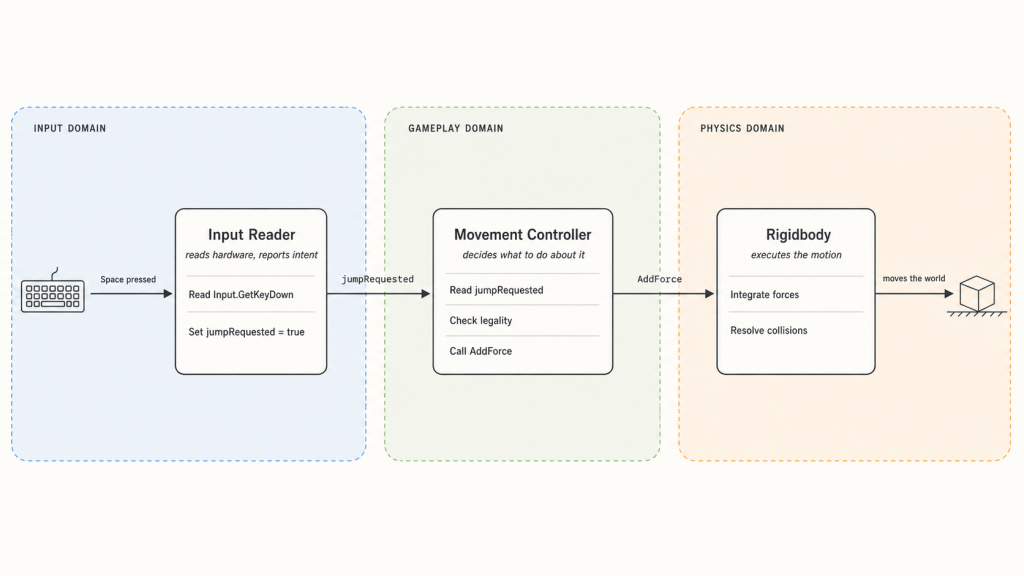

The fix is conceptually simple: don’t let the input handler decide what happens. Let it just report what the player did, and put the decision-making somewhere else.

Here’s a first attempt:

```csharp
using UnityEngine;

public class Player : MonoBehaviour
{
    private Rigidbody body;
    private bool isGrounded = true;
    private bool jumpRequested;

    void Awake() => body = GetComponent<Rigidbody>();

    // Input side: just reports what was pressed.
    void Update()
    {
        if (Input.GetKeyDown(KeyCode.Space))
            jumpRequested = true;
    }

    // Gameplay side: decides what to do about it.
    void FixedUpdate()
    {
        if (jumpRequested)
        {
            if (isGrounded)
            {
                body.AddForce(Vector3.up * 5f, ForceMode.VelocityChange);
                isGrounded = false;
            }
            // Consume the request whether or not it was honored.
            jumpRequested = false;
        }
    }

    void OnCollisionEnter(Collision _) => isGrounded = true;
}
```

It’s still one script, but the two halves are now visibly doing different jobs. Update() reads the keyboard and writes a flag. That’s the upstream half, ending exactly at “the player wants to jump.” FixedUpdate() reads the flag, checks whether the action is currently legal, and, only if it is, applies the actual motion through the Rigidbody. That’s the downstream half. Walls, gravity, and friction all get a vote now, because we’re going through Unity’s physics engine instead of around it. Input proposes; gameplay disposes; physics executes.

A disclaimer before this becomes a habit: cramming the input half into Update() and the gameplay half into FixedUpdate() is a teaching shortcut, not what production code looks like. Treat the code in this post as illustrations of the principle, not templates to copy.

This is a real improvement. But it’s also a tutorial-sized example — one action, one state — and tutorial examples have a way of looking fine until you give them more to do.

Let’s give it more to do. Suppose the character also needs to crouch and attack:

```csharp
// Input side
void Update()
{
    if (Input.GetKeyDown(KeyCode.Space)) jumpRequested = true;
    if (Input.GetKeyDown(KeyCode.LeftControl)) crouchRequested = true;
    if (Input.GetKeyDown(KeyCode.Mouse0)) attackRequested = true;
}

// Gameplay side
void FixedUpdate()
{
    if (jumpRequested)
    {
        if (isGrounded && !isCrouching && !isAttacking)
        {
            body.AddForce(Vector3.up * 5f, ForceMode.VelocityChange);
            isGrounded = false;
        }
        jumpRequested = false;
    }

    if (crouchRequested)
    {
        if (isGrounded && !isAttacking)
            isCrouching = true;
        crouchRequested = false;
    }

    if (attackRequested)
    {
        if (!isAttacking && !isStunned)
        {
            // play attack animation, queue damage, etc.
            isAttacking = true;
        }
        attackRequested = false;
    }
}
```

You haven’t built anything ambitious yet (three actions, a handful of flags), and already the gameplay-side code is turning into a wall of conditional checks. Each new behavior adds a request flag, a status flag, and a small thicket of “make sure none of the other things are happening” negations. Imagine this script with sprinting, blocking, climbing, swimming, and dialogue interactions. Imagine the bug reports.

Something is wrong here. Not catastrophically wrong — the code still works, and it’s already meaningfully better than what we started with — but there’s a smell drifting up off the gameplay side that we haven’t named yet.

The state machine you didn’t write

Look at that gameplay-side code again. Every if is checking a different combination of isGrounded, isCrouching, isAttacking, isStunned. Every block is trying to reconstruct, in its own way, the same question: “what is the character actually doing right now?”

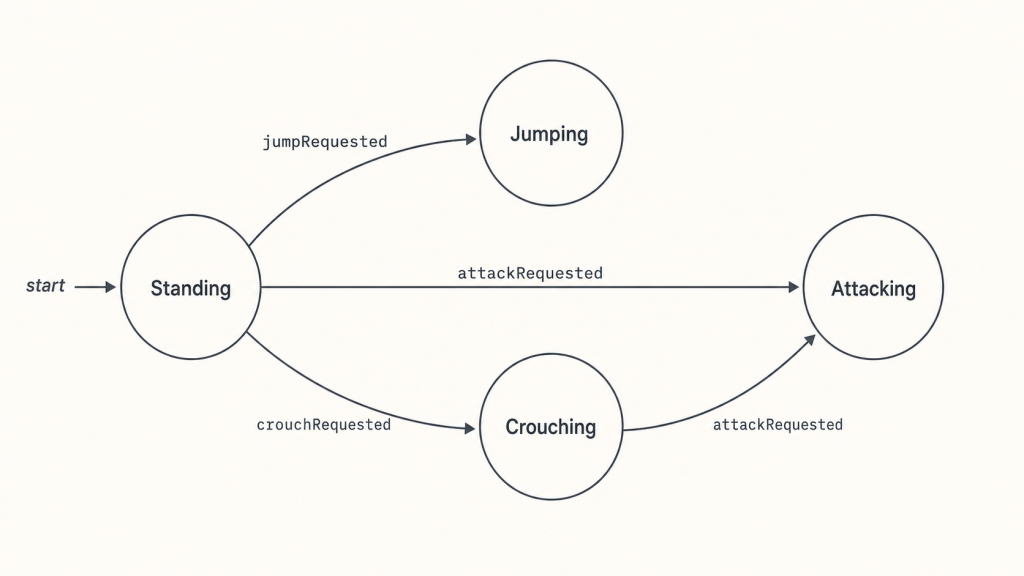

The character clearly has modes. Standing, jumping, crouching, attacking — those are all modes, and programmers call them states. A system that tracks which state we’re in and which transitions between states are allowed is called a state machine. Our character already has one. It just lives nowhere. There’s no place in the code where you can read the list of states, or the rules for moving between them. The state machine is there, but it’s implicit, smeared across a tangle of flags.

This is a common, painful trap, and it deserves more space than I want to give it here — so I’ve written about it separately. For this post, just one consequence is enough to feel the danger: with four booleans, you can express sixteen combinations, but only a handful of those — standing, crouching, jumping, attacking — actually make sense. The rest are nonsense the language will happily let you reach. The only thing keeping the character out of those combinations is your personal vigilance about every if condition you write. Sooner or later you forget, and a player T-poses mid-air because two if blocks disagreed about what was going on.

The fix, in one sentence: stop expressing the character’s mode through a pile of independent flags, and start expressing it as a single named thing — an enum, or a sealed hierarchy of state classes — that can only hold one value at a time. The compiler then enforces what your if conditions were failing to enforce by hand. The linked post goes deep on how and why.

Here’s what that looks like in our code. We replace the pile of booleans with a single named state:

```csharp
public enum CharacterState
{
    Standing,
    Crouching,
    Jumping,
    Attacking,
    Stunned,
}

private CharacterState state = CharacterState.Standing;

// Gameplay side, now state-driven instead of flag-driven:
void FixedUpdate()
{
    if (jumpRequested)
    {
        if (state == CharacterState.Standing)
        {
            body.AddForce(Vector3.up * 5f, ForceMode.VelocityChange);
            state = CharacterState.Jumping;
        }
        jumpRequested = false;
    }

    if (crouchRequested)
    {
        if (state == CharacterState.Standing)
            state = CharacterState.Crouching;
        crouchRequested = false;
    }

    if (attackRequested)
    {
        if (state == CharacterState.Standing || state == CharacterState.Crouching)
            state = CharacterState.Attacking;
        attackRequested = false;
    }
}
```

Drawn out, the state machine our code now describes looks like this:

A few small things changed in our code, and they’re all consequential. Each if now asks a single question — what state are we in? — instead of stacking several flag checks together. The list of valid states is visible at the top of the file, in the enum itself, instead of being scattered across whatever combinations of booleans your ifs have implicitly decided are legal. And the entire bug class of “two contradictory flags somehow both true” is gone, because the type system literally cannot express two states at the same time.

A caveat before we move on: this is a deliberately stripped-down state machine. The code is one-way — once the character enters Crouching, Jumping, or Attacking, nothing brings them back to Standing, because wiring the return transitions is mechanical and would just bury the principle under more code. Real production FSMs are richer in other ways too: entry and exit actions per state, hierarchical sub-states, side effects on transitions, and the full transition graph that would actually exercise states like the Stunned we declared but never used. The principle doesn’t depend on how rich the FSM gets — what matters is that the states and transitions live somewhere a reader can find them, and the language enforces that you’re only ever in one of them at a time.
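If you want to see what “the transitions live somewhere a reader can find them” might look like once the return paths are wired in, here is a minimal sketch in plain C# with no Unity types. The `Trigger` enum, the `CharacterFsm` class, and the specific return events (`Landed`, `StandUp`, `AttackFinished`) are my assumptions for illustration, not something the code above defines.

```csharp
using System.Collections.Generic;

public enum CharacterState { Standing, Crouching, Jumping, Attacking, Stunned }

// Hypothetical triggers: the first three mirror the request flags used earlier;
// the last three are assumed "return" events that the earlier code leaves out.
public enum Trigger { Jump, Crouch, Attack, Landed, StandUp, AttackFinished }

public sealed class CharacterFsm
{
    // Every legal transition is one row. Anything not listed is rejected.
    private static readonly Dictionary<(CharacterState, Trigger), CharacterState> Transitions =
        new Dictionary<(CharacterState, Trigger), CharacterState>
        {
            { (CharacterState.Standing,  Trigger.Jump),           CharacterState.Jumping   },
            { (CharacterState.Standing,  Trigger.Crouch),         CharacterState.Crouching },
            { (CharacterState.Standing,  Trigger.Attack),         CharacterState.Attacking },
            { (CharacterState.Crouching, Trigger.Attack),         CharacterState.Attacking },
            { (CharacterState.Crouching, Trigger.StandUp),        CharacterState.Standing  },
            { (CharacterState.Jumping,   Trigger.Landed),         CharacterState.Standing  },
            { (CharacterState.Attacking, Trigger.AttackFinished), CharacterState.Standing  },
        };

    public CharacterState State { get; private set; } = CharacterState.Standing;

    // Returns true if the trigger was legal in the current state and the state
    // changed; returns false and leaves the state untouched otherwise.
    public bool Fire(Trigger trigger)
    {
        if (!Transitions.TryGetValue((State, trigger), out var next))
            return false;
        State = next;
        return true;
    }
}
```

The whole transition graph is now one table you can read top to bottom, and an illegal request (crouching mid-air, say) is rejected simply because no row exists for it.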

That’s the gameplay half on solid ground — a single named state, transitions visible at a glance, the type system enforcing what our flags couldn’t. But we promised at the start of the post that we’d build both halves of the seam properly, and so far we’ve only touched one. The input side has been waiting the whole time, doing exactly what it did on day one. Time to turn around.

The other half

Take another look at our cleaned-up code — but this time look at the input side. We never touched it. The gameplay side got a state machine, an enum, a clean if per request. The input side is still doing exactly what it was on day one:

```csharp
void Update()
{
    if (Input.GetKeyDown(KeyCode.Space)) jumpRequested = true;
    if (Input.GetKeyDown(KeyCode.LeftControl)) crouchRequested = true;
    if (Input.GetKeyDown(KeyCode.Mouse0)) attackRequested = true;
}
```

Look at any one of those lines. “If the space key went down, the player wants to jump.” That single line is, in miniature, a complete input system: it takes a hardware event on the left and produces a gameplay intent on the right. That translation, in fact, is what an input system does; it’s the entire job description. There’s nothing wrong with doing it.

What’s wrong is that the entire system is that one line, and there’s no room in it for anything else.

Suppose you want to let the player rebind keys. The mapping “Space = jump” needs to live somewhere editable — somewhere a settings menu can poke at, somewhere a player can change without recompiling. In our code it doesn’t live anywhere; it’s literally hardcoded into Update(). To rebind, you’d have to rewrite the script. So the input system needs a binding table that’s data, not code — something stored, queryable, swappable at runtime.

Suppose you want a gamepad button to also trigger jump. The input system needs to accept that multiple hardware events can produce the same intent. In our code, the only way is to chain ||s into the existing line, and from there every binding that supports multiple devices grows into a tangle. So the input system needs a many-to-one mapping — multiple hardware sources allowed to feed the same gameplay intent, without anyone downstream knowing or caring how many.

Suppose you want jump to fire only after the player holds the key for half a second. Or after a double-tap. Or only when Shift is held down at the same time. These are all forms of recognition — looking at a stream of raw hardware events and deciding, based on timing and pattern, what intent they add up to. Each one is its own small state machine that has to live somewhere. Our one-line system has nowhere to put any of them. So the input system needs room for recognition logic — its own little machinery between hardware events arriving and intents going out.

Each of these is a real feature any non-trivial game eventually needs — and none of them have anywhere to live in code that is, structurally, one line per binding.

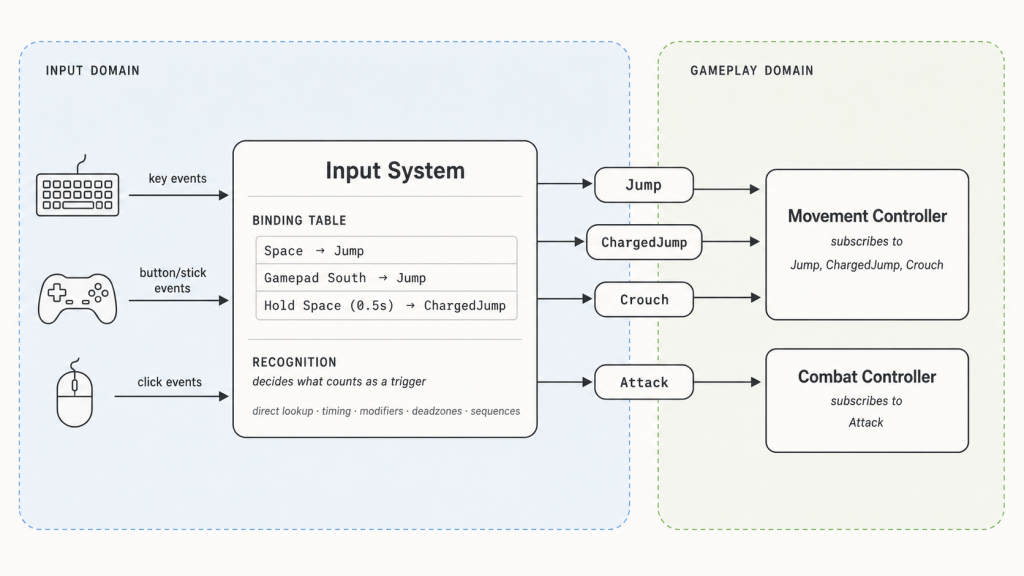

So here’s the brief. A real input system needs three things our snippet doesn’t have:

- bindings as data, so they can be stored, queried, and rebound at runtime;

- many-to-one mappings, so multiple hardware sources can feed the same intent;

- room for recognition, so it can interpret patterns over time, not just instantaneous events.

That’s the spec. The next section starts to give it shape.

A vocabulary

Building a real input system is a book’s worth of code — recognition pipelines, device abstraction, frame-accurate timing, the lot. That’s not what this post is. What we can do — and what’s actually more useful at this stage — is name the two ideas the architecture is built around, define their concerns clearly, and walk through a concrete example of how they fit together. From here on, the post is architecture rather than implementation: diagrams, walkthroughs, and a vocabulary you can take into any engine.

Two terms do most of the work. Both are standard across the industry, so once you have them you’ll recognize them everywhere.

Action. An action is a named, abstract intent — Jump, Crouch, OpenInventory. It’s what the gameplay code reads, and it’s all the gameplay code ever reads. The Movement Controller doesn’t ask “is space down?” It asks “did the player want to jump?” The action is the answer.

The point of an action is that it’s stable. Its name and meaning don’t change when the player rebinds a key, switches to a gamepad, or plays on a phone. Whatever happens at the hardware end of the system, the gameplay end always sees the same vocabulary: Jump, Crouch, OpenInventory. The hardware end is free to evolve; the gameplay end never has to notice.

Binding. A binding is a rule that wires hardware events to an action. “Space triggers Jump.” “Gamepad button South triggers Jump.” “Hold Space for half a second triggers ChargedJump.” The binding is the only place in the system where keyboard keys, gamepad buttons, and timing windows are mentioned by name. Upstream of bindings, everything is raw hardware events. Downstream, everything is named actions.

A binding table is the system’s source of truth — a list of rules consulted at runtime, separate from any code that produces or consumes them. Change a row, and the same gameplay code responds to a different key. Add multiple rows targeting the same action, and many-to-one mapping comes for free: the action fires whenever any of its bindings is satisfied.

Recognition is the third concern — and unlike action and binding, it doesn’t sit in one tidy place. It’s the process that turns raw hardware input into a clean action: deciding what counts as a trigger. The simplest form of recognition is the binding-table lookup itself — Space goes down, the table says Space → Jump, the Jump action fires. That’s recognition at its most basic: match the event, emit the action. But the same machinery extends naturally outward. Should the action only fire after the key is held for half a second? Only when Shift is down at the same time? Only on a double-tap within 200ms? Only after an analog stick clears its deadzone? Only on a specific sequence of inputs? Each of those is a richer recognition rule on top of the same lookup, and each has to live somewhere in the input system. Where exactly is an architectural choice. Some systems attach recognizers to individual bindings as decorators; others run a dedicated processing layer between hardware and bindings; some games build their own state machines on top of the input system for genuinely complex input logic. What matters is that all of it happens before the action fires, so gameplay code only ever sees the final clean intent.
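To make “each one is its own small state machine” concrete, here is a rough sketch of one recognizer: a double-tap detector. It is plain C#, and time is passed in explicitly so the sketch doesn’t depend on any engine clock. The class name, the method name, and the 0.2-second default are assumptions for illustration.

```csharp
// Recognizes a double-tap: two presses of the same control within a time window.
// The caller supplies the current time in seconds (in Unity that might be
// something like Time.unscaledTime, but that wiring is left out here).
public sealed class DoubleTapRecognizer
{
    private readonly double windowSeconds;
    private double? lastPressTime; // null means "no tap in flight"

    public DoubleTapRecognizer(double windowSeconds = 0.2)
    {
        this.windowSeconds = windowSeconds;
    }

    // Call once per press event. Returns true when this press completes a
    // double-tap, which is the moment the action should fire.
    public bool OnPress(double now)
    {
        if (lastPressTime.HasValue && now - lastPressTime.Value <= windowSeconds)
        {
            lastPressTime = null; // consume both taps so a third press starts fresh
            return true;
        }

        lastPressTime = now; // first tap, or the previous one expired
        return false;
    }
}
```

Nothing downstream needs to know this class exists. Gameplay code only sees the action that fires when `OnPress` returns true.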

A concrete walkthrough makes the flow easier to feel. Suppose the binding table includes:

- Space → Jump

- Hold Space (0.5s) → ChargedJump

- Gamepad South → Jump

- Left Ctrl → Crouch

- Left Mouse → Attack

The player taps Space briefly. A binding matches; the Jump action is emitted. The Movement Controller, subscribed to Jump, runs its handler. It knows nothing about Space, nothing about timing — only that the player wanted to jump.

The player tries again, this time holding Space for a full second. The hold rule, interpreting the event stream somewhere inside the input system, eventually fires. The ChargedJump action is emitted; whichever subscriber handles charged jumps responds. Where the hold rule physically lives in the input system isn’t visible to gameplay code, and doesn’t need to be — by the time the action reaches the subscriber, the work is already done.

The player picks up a gamepad and presses South. A different binding row matches; the same Jump action fires. Many-to-one mapping comes for free.

The player rebinds Jump to Enter. One row in the table changes; nothing else does. Every subscriber to Jump keeps working without modification. The Combat Controller, listening for Attack, never even notices something changed.
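Compressed into code, the walkthrough above might look roughly like this. It is a sketch in plain C#, not a real input system: `InputRouter` and its methods are hypothetical, controls and actions are just string labels, and the hold rule is left out to keep it short.

```csharp
using System;
using System.Collections.Generic;

public sealed class InputRouter
{
    // Binding rows: control name -> action name. This is data, editable at runtime.
    private readonly Dictionary<string, string> bindings =
        new Dictionary<string, string>();

    // Subscribers per action name. They never learn which control was used.
    private readonly Dictionary<string, List<Action>> subscribers =
        new Dictionary<string, List<Action>>();

    public void Bind(string control, string action) => bindings[control] = action;

    public void Unbind(string control) => bindings.Remove(control);

    public void Subscribe(string action, Action handler)
    {
        if (!subscribers.TryGetValue(action, out var list))
            subscribers[action] = list = new List<Action>();
        list.Add(handler);
    }

    // The hardware side calls this. The flow is one-directional:
    // control -> binding lookup -> action -> subscribers.
    public void OnControlPressed(string control)
    {
        if (!bindings.TryGetValue(control, out var action)) return;
        if (!subscribers.TryGetValue(action, out var list)) return;
        foreach (var handler in list) handler();
    }
}
```

Binding both "Space" and "GamepadSouth" to "Jump" gives the many-to-one mapping with no extra code, and rebinding Jump to Enter is an `Unbind("Space")` followed by a `Bind("Enter", "Jump")`. The handlers passed to `Subscribe` are never touched.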

That’s the system at this stage. The flow is strictly one-directional: hardware → input system → actions → consumers. Nothing travels backward. The input system doesn’t know what the actions do; the gameplay code doesn’t know how they got triggered. Each side has its own private vocabulary; the action is where they meet.

There’s still one piece missing — and it’s the most interesting one. So far the binding table is fixed: the same set of bindings is always active. But what an input means in a real game depends on what the player is doing. When a menu opens, pressing Space shouldn’t make the character jump in the world behind it — even though Space → Jump is still sitting in the table. Our current architecture has no way to tell the input system to stop honoring that binding while the menu is open. Capturing that distinction is going to require something we’ve been carefully avoiding: a small backward channel from the gameplay side to the input side. That’s where the next section turns.

The dangerous addition

There’s a reason we’ve been avoiding context until now. It’s the one piece that doesn’t fit cleanly into the architecture we’ve been building.

A note on the term first. In input-system parlance, context means which set of bindings is currently active. Most games need more than one. Space → Jump makes sense in the world; it doesn’t make sense in the pause menu. Escape → Close makes sense in a menu; it doesn’t make sense while running. Different contexts, different active bindings — and the game switches between them as the player moves through different modes.

Up to this point, the input system has been strictly upstream. Hardware events come in, actions go out, and nothing flows the other way. That one-directional flow is what made everything else clean: the input system can be developed, tested, and reasoned about without knowing anything about gameplay.

Context breaks that. To know which binding set is in force, the input system has to be told — and the only thing that knows is the gameplay code. The moment we let gameplay tell input which context to use, we’ve opened a backward channel.

And backward channels, once they exist, multiply. If we’re not deliberate about this, every gameplay component with a reason to mutate input state will do so. Within a few features the input system has a dozen callers reaching in from a dozen directions, and the separation of concerns we worked so hard for is ornamental — the boxes in the diagram still exist, but the arrows inside the code go everywhere.

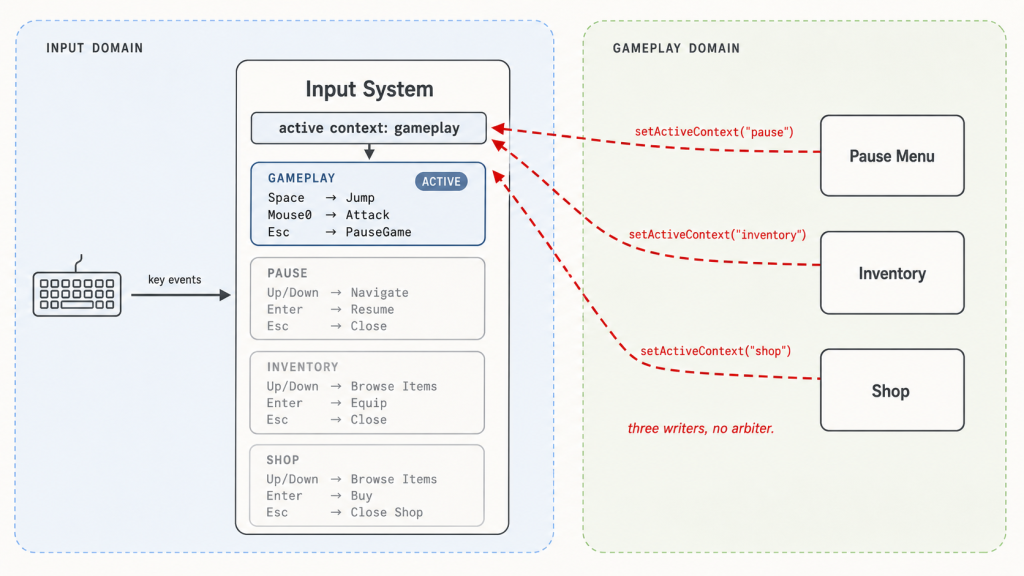

Here’s how that goes wrong.

The obvious first move is what every developer reaches for. When the pause menu opens, it calls inputSystem.setActiveContext("pause"). When it closes, it calls setActiveContext("gameplay"). Works perfectly. Ship it.

Then someone adds an inventory. Its open handler calls setActiveContext("inventory"). Its close handler calls setActiveContext("gameplay"). Still works.

Then someone adds a shop. Players can open it directly from a vendor in the world, or from a button inside the inventory. The shop’s open handler calls setActiveContext("shop"). The player closes the shop. What should the active context be now? If they opened it from the world, the answer is gameplay. If they opened it from inside the inventory, the answer is inventory. The shop’s close handler can only hardcode one — let’s say it picks gameplay, the more common entry point. Now any player who opened the shop from the inventory finds themselves dropped into gameplay with the inventory still on screen, and Space starts firing Jump again.

Multiple components believed they owned the context. The last one to write won. Same anti-pattern as the implicit state machine, hoisted one level up.

The fix is the same fix as before — and at this point in the post, you can probably guess what it is.

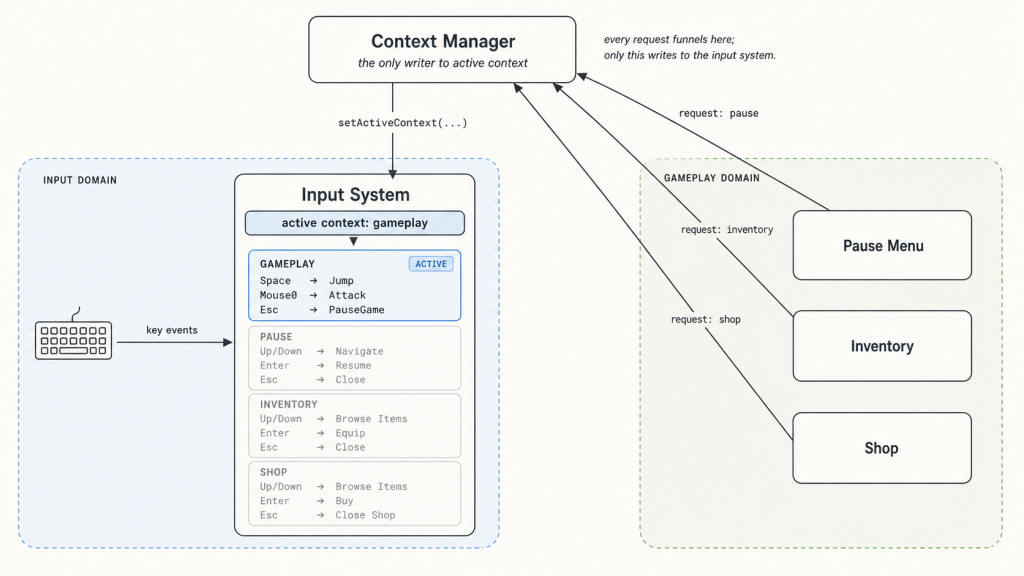

Whenever multiple parties want to mutate shared state, the answer is to name the state, give it a single owner, and route every mutation through that owner. We’ve done this twice already: once for the character’s locomotion state (replacing scattered booleans with a single enum), once for the input system’s binding logic (replacing scattered ifs with a single binding table). Now we do it a third time, for the game’s context.

The current context is real state. Real state needs a real home. So we introduce one: a context manager. It’s a separate component whose only job is to know what context is currently active, and to be the only thing in the entire system allowed to tell the input system about it. Consumers don’t talk to the input system anymore. They talk to the context manager — requesting a context change, signaling that a UI surface has opened, informing the manager when one has closed. The manager arbitrates, decides what the new active context should be, and forwards exactly one instruction to the input system: “the active context is now X.”

The shape of the manager itself is a separate question — and one worth thinking about, because the right shape depends on the game. Some context flows are stacks (inventory opens over gameplay, shop opens over inventory; closing pops one layer at a time). Some are replacements (entering a cutscene replaces gameplay; ending it restores). Some are hierarchies (UI contexts share a “back” binding while differing in everything else). Each is a different internal structure behind the same outward-facing component, and we’ll come back to them in the further-reading section. The architectural point we need here is just that the manager exists, that exactly one of them exists, and that it is the only thing the input system listens to.
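For the stack-shaped case, a minimal sketch might look like the following. It is plain C#, and everything in it is an assumption for illustration: the `ContextManager` API, the string context names, and the callback that stands in for the input system’s “set the active context” entry point.

```csharp
using System;
using System.Collections.Generic;

public sealed class ContextManager
{
    private readonly Stack<string> stack = new Stack<string>();

    // The single channel into the input system. Nothing else should hold it.
    private readonly Action<string> setActiveContext;

    public ContextManager(string baseContext, Action<string> setActiveContext)
    {
        this.setActiveContext = setActiveContext;
        stack.Push(baseContext);
        setActiveContext(baseContext);
    }

    public string Active => stack.Peek();

    // A UI surface opened: its context goes on top of whatever was active.
    public void Push(string context)
    {
        stack.Push(context);
        setActiveContext(context);
    }

    // A UI surface closed: restore whatever was underneath it.
    // Only the top context can be closed, and the base context is never popped.
    public bool Pop(string context)
    {
        if (stack.Count <= 1 || stack.Peek() != context) return false;
        stack.Pop();
        setActiveContext(stack.Peek());
        return true;
    }
}
```

With this shape the shop no longer has to guess where it was opened from. Closing it pops one layer, and the manager restores the inventory context or the gameplay context depending on what was underneath.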

For the third time in this post, the same pattern has surfaced — state without an owner, mutated by parties with no rule for arbitration, producing bugs that look intermittent but are structurally inevitable. And for the third time, the fix was the same: name the state, name the owner, route the mutations. The locomotion enum did it for the character. The binding table did it for the input system. The context manager does it for the game’s mode.

There’s a deeper reason all three of these fixes worked. That’s the next section.

Easier than the wrong thing

The right thing is always possible. You can write disciplined code, remember every invariant, document every assumption, and produce something correct in any system, no matter how badly designed. So the test of an architecture isn’t whether the right thing is possible in it. The test is whether the right thing is easier than the wrong thing at the moment a tired developer reaches for the keyboard at 4pm on a Friday.

Look at the three fixes we worked through.

When we replaced scattered booleans with an enum, we didn’t make it impossible to misrepresent the character’s mode — you could always pile up flags somewhere else. We made the right thing easier. Adding a new state means typing a name in one place. The wrong thing — scattering the mode across half a dozen booleans — now requires actively swimming against the structure of the code.

When we built a binding table, we didn’t make it impossible to hardcode keys into gameplay scripts — anyone could still write if (Input.GetKeyDown(...)) in a movement controller. We made the right thing easier. Adding a binding is editing one row. The wrong thing — sprawling input checks across consumer code — now requires deliberately bypassing a system that’s right there asking to be used.

When we introduced a context manager, we didn’t make it impossible for components to call into the input system directly. We made the right thing easier — every component talks to one named arbitrator. The wrong thing — components calling into the input system directly — now requires going around the arbitrator whose entire job is to prevent exactly that.

In every case, the architecture didn’t enforce correctness. It made correctness the path of least resistance. Every diagnosis in this post was really the same diagnosis:

The wrong thing was easier than the right thing.

And every fix was really the same fix: change the architecture until that stopped being true.

Once you can see it, the test generalizes to design questions this post never touched. Is the right thing easier than the wrong thing in this system? Ask it, and most of the answers fall out.

That’s the whole argument. Input, action, context — three layers, one principle. Build the system that makes the right thing the easy thing. Everything else follows.

Further reading

Input system design is genuinely book-sized, and what we just walked through is one thread through it — roughly in the order I’d want a beginner to encounter the ideas. What follows is a tour of several things we skipped along the way: useful refinements worth knowing, and a few open questions worth chewing on.

A brief tour of Unity’s Input System

If you’re working in Unity, the package called Input System (the modern replacement for the legacy Input.GetKey... API) maps onto the architecture this post described almost one-for-one. The vocabulary is slightly different in places, but the underlying ideas are the same.

- Actions. Same name, same meaning. Named, abstract intents: Jump, Move, OpenInventory. Your code subscribes to these.

- Bindings. Same name, same meaning. Rules that wire hardware controls to actions. A single action can have many bindings (keyboard, gamepad, touchscreen), and Unity treats this as the default case, not the special one.

- Action Maps. This is what Unity calls contexts. An Action Map is a named collection of actions enabled or disabled together, typically Gameplay, UI, Cutscene, and so on. You switch contexts by enabling one map and disabling the others. This is exactly the role the context manager arbitrates over.

- Processors. Value transformations. Things like inverting a stick axis, applying a deadzone, normalizing a vector, or scaling sensitivity. Processors operate on the value of an input: they reshape what comes through, but don’t change when the action fires.

- Interactions. Timing-and-pattern rules. Hold (action fires after the control has been held for some duration), Tap (action fires on a quick press-and-release), Multi-Tap (double-tap, triple-tap), Slow-Tap, and so on. Interactions are the recognition layer this post described — and Unity attaches them to bindings as decorators, which is one of the architectural choices we noted was possible but not mandatory.

It’s worth knowing that Unity did commit to “recognizers attached to bindings” as its architecture. That choice has tradeoffs: it makes simple recognition discoverable and easy to author, but complex sequence-based input — fighting-game motions, gesture recognizers, anything with deep history — usually ends up living somewhere outside the input system anyway. If you find yourself fighting Unity’s interactions for some scenario, that’s not a sign you’re using it wrong; it’s a sign your scenario is asking for a different shape, and that’s fine.
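To connect the vocabulary to something you can paste into a project, here is a small sketch that builds one Action Map with one action and two bindings entirely in code. It assumes the Input System package is installed and enabled in your Unity project. The class name `JumpInput` is hypothetical, and most projects would author these in an Input Actions asset rather than in a script.

```csharp
using UnityEngine;
using UnityEngine.InputSystem;

// A sketch only: requires Unity with the Input System package active.
public class JumpInput : MonoBehaviour
{
    private InputActionMap gameplay;
    private InputAction jump;

    void Awake()
    {
        // Action Map: a named group of actions enabled or disabled together.
        gameplay = new InputActionMap("Gameplay");

        // One action, two bindings: many-to-one mapping as the default case.
        jump = gameplay.AddAction("Jump", InputActionType.Button);
        jump.AddBinding("<Keyboard>/space");
        jump.AddBinding("<Gamepad>/buttonSouth");

        // The subscriber sees "Jump", never the key or button behind it.
        jump.performed += _ => Debug.Log("Jump");
    }

    // Enabling or disabling the whole map is how a context gets switched on or off.
    void OnEnable() => gameplay.Enable();
    void OnDisable() => gameplay.Disable();
}
```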

Lifecycles: started, performed, canceled

I described actions in this post as if they fire once when the rule is satisfied. In practice, every meaningful input has a lifecycle: it begins, it does something, and it ends. Unity formalizes this with three phases — Started, Performed, Canceled — and most input systems converge on something similar, because the alternative is creating a parallel action for every “begin” and “end” you care about, which gets out of hand fast.

- Started. The rule has begun matching. The button went down. The hold timer started. The double-tap candidate is in flight.

- Performed. The rule has been satisfied. The hold has been held long enough. The double-tap completed within the window. This is the moment that, in the simplified model from earlier in this post, “the action fires.”

- Canceled. The rule was started but didn’t complete. The button was released before the hold duration elapsed. The tap timer ran out before the button was released. The double-tap missed its window.

These are useful even for simple actions. Started lets you trigger animations or audio the moment a press begins, before you know what it’ll become. Canceled lets you tear those down cleanly when the player changes their mind. Without lifecycles, you end up creating extra named actions — JumpButtonDown, JumpButtonUp, JumpHoldStarted, JumpHoldCanceled — and the action vocabulary doubles or triples for no real gain. With lifecycles, one Jump action emits state changes its subscribers can interpret as needed.
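Here is a rough sketch of one action reporting its lifecycle instead of a single “fired” moment. It follows the simplified phase definitions in the list above, so real input systems may differ in detail (for instance in what they report on release after a completed hold). `HoldAction`, its methods, and the 0.5-second default are assumptions for illustration, and time is passed in explicitly to keep the sketch engine-independent.

```csharp
using System;

public enum ActionPhase { Started, Performed, Canceled }

public sealed class HoldAction
{
    private readonly double holdSeconds;
    private double? pressedAt; // null while the button is up
    private bool performed;

    // Subscribers get every phase and decide which ones they care about.
    public event Action<ActionPhase> PhaseChanged;

    public HoldAction(double holdSeconds = 0.5)
    {
        this.holdSeconds = holdSeconds;
    }

    // The button went down: the rule has begun matching.
    public void Press(double now)
    {
        pressedAt = now;
        performed = false;
        PhaseChanged?.Invoke(ActionPhase.Started);
    }

    // Call every frame. Reports Performed once, when the hold has lasted long enough.
    public void Tick(double now)
    {
        if (pressedAt.HasValue && !performed && now - pressedAt.Value >= holdSeconds)
        {
            performed = true;
            PhaseChanged?.Invoke(ActionPhase.Performed);
        }
    }

    // The button came up. If the hold never completed, report Canceled.
    public void Release()
    {
        if (pressedAt.HasValue && !performed)
            PhaseChanged?.Invoke(ActionPhase.Canceled);
        pressedAt = null;
    }
}
```

A charge-up animation can start on Started, commit on Performed, and tear itself down on Canceled, all from one named action.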

Hierarchical state machines

The state machine we drew for the character had four flat states: standing, jumping, crouching, attacking. That works for an example, but real characters quickly accumulate states with mostly-similar behavior. Imagine adding a swim mode: SwimIdle, SwimMoving, SwimAttacking, SwimDodging. Then a climb mode. Then a mounted mode. If each is a flat sibling of the original states, you end up duplicating most of your transition rules — on attack-pressed go to attacking; on swim-attack-pressed go to swim-attacking; on climb-attack-pressed go to climb-attacking — and the state machine sprawls.

A hierarchical state machine lets you nest one state machine inside another. The character has an outer state machine with OnFoot, Swimming, Climbing, Mounted. Inside each, an inner state machine — Idle, Moving, Attacking, Dodging — that’s largely shared in shape but different in detail. Transitions, animations, and rules can be inherited, overridden, or replaced at each layer.

The benefit shows up wherever two state machines are mostly the same with a few critical differences. Locomotion modes (foot/swim/climb) share the idle/moving/attacking shape but differ in physics. Combat stances (sword/bow/spell) share the ready/wind-up/strike/recover shape but differ in timing and reach. UI panels share the opening/open/closing/closed shape but differ in content. In each case, a hierarchy lets you describe the shared shape once and customize it where it matters.

The cost is conceptual overhead. Hierarchical state machines come with more vocabulary (entry actions, exit actions, history states, parallel regions), and they’re easier to over-design than to under-design. If you find yourself reaching for one, the test is whether the duplication you’d be eliminating is real, structural duplication or just a few coincidentally similar transitions you can write twice without anyone noticing.

Open questions to chew on

A few questions the post deliberately didn’t answer. They’re good ones to think through on your own — there are real answers, but the reasoning is what’s worth doing.

Holding a button has a visible state: a bar fills, a charge indicator pulses, a slingshot stretches. Who owns that visual? The input system knows about the timer; the gameplay code knows about the visual. So which side draws the bar? And whichever you pick, what does the other side need to expose so the drawing side can do its job? The question is sharper than it looks: it forces you to decide what counts as “input” once you admit input has a visible duration, not just a moment.

Haptic feedback — vibration, rumble, force feedback. Where does it live in this architecture? It’s tempting to say “it’s just output, that’s not an input concern.” But haptics are usually a response to player input — the controller rumbles when you fire, when you take damage, when you hit a wall. So it lives downstream of actions, but it talks to the same hardware the input came from. Does the input system grow an “output” half? Does gameplay talk directly to the controller? Is there a third actor we haven’t named yet? Pick something and defend it.

Some inputs are events (a key going down). Others are streams (mouse delta, gamepad stick, gyroscope, accelerometer). The architecture in this post is built around discrete actions firing at moments: Jump, Attack, OpenInventory. A continuous stream of values doesn’t obviously fit. Does it become an action that fires constantly? An action that holds a current value the gameplay code polls instead of subscribing to? Something else entirely? The mental model has to bend somewhere, and figuring out where it bends, and what that costs, is one of the more useful exercises for understanding what this architecture actually buys you. (A hint: Unity’s Input System has a setting called Action Type, InputActionType in code. You may want to look it up.)

Input buffering. Fighting games and Soulslikes accept inputs slightly before they’re allowed — pressing attack 100ms before your recovery animation ends still counts. Where does that buffer live? Is it a property of the action itself, where the action remembers it was started but not consumed? A separate buffering layer between the input system and gameplay? Something the gameplay code does itself, per-character? Each has a different blast radius, and a different answer to who decides how forgiving the game feels.
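If it helps to have something concrete to argue with, here is a sketch of just one of those options: a separate buffering layer between the input system and gameplay. It is not offered as the answer. InputBuffer, Record, TryConsume, and the 0.1-second window are all hypothetical.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical buffering layer: remembers the most recent press of each action
// for a short window, and hands it out at most once.
public sealed class InputBuffer
{
    private readonly double windowSeconds;
    private readonly Dictionary<string, double> pressedAt = new Dictionary<string, double>();

    public InputBuffer(double windowSeconds)
    {
        this.windowSeconds = windowSeconds;
    }

    // Called by the input side whenever an action starts.
    public void Record(string action, double now)
    {
        pressedAt[action] = now;
    }

    // Called by gameplay at the moment it becomes able to act.
    // Returns true only if the press is recent enough and not yet consumed.
    public bool TryConsume(string action, double now)
    {
        if (!pressedAt.TryGetValue(action, out var time)) return false;
        pressedAt.Remove(action);  // consumed or expired, either way forget it
        return now - time <= windowSeconds;
    }
}
```

Gameplay would call TryConsume("Attack", now) on the frame recovery ends. Notice what this placement implies: the window is one global number owned by the buffer, so per-character forgiveness would need a different design, which is exactly the trade-off the question is asking about.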

Action consumption and priority. A pause menu and the world both subscribe to Cancel. The player presses Escape. Both fire — but only one should respond. (Or should both? Or neither, until something arbitrates?) Are actions consumable? Does the first subscriber win? The last? Should subscribers declare a priority? The naïve “everyone subscribes; everyone fires” model breaks the moment two subscribers disagree about what should happen — and the answer interacts in interesting ways with the context manager.
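As with buffering, a sketch of one candidate can make the trade-offs easier to see. This one assumes subscribers declare a priority and report whether they consumed the press; it is one possible arbitration scheme, not a recommendation. PrioritizedAction, Subscribe, and Fire are hypothetical names.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical consumable action: handlers run from highest priority down,
// and the first one that returns true stops the chain.
public sealed class PrioritizedAction
{
    private readonly List<(int priority, Func<bool> handler)> subscribers =
        new List<(int priority, Func<bool> handler)>();

    public void Subscribe(int priority, Func<bool> handler)
    {
        subscribers.Add((priority, handler));
        // Highest priority first. List.Sort is not stable, so handlers with
        // equal priority have no guaranteed relative order.
        subscribers.Sort((a, b) => b.priority.CompareTo(a.priority));
    }

    // Returns true if some subscriber consumed the press.
    public bool Fire()
    {
        foreach (var (_, handler) in subscribers)
        {
            if (handler()) return true;
        }
        return false;
    }
}
```

A pause menu could subscribe to Cancel at priority 10 and return true only while it is open, with the world subscribing at priority 0. The sketch leaves the harder part untouched: who assigns those numbers, and whether the context manager should be doing this job instead.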

Recording and replay. Sooner or later most games need to record player input and play it back — for replays, esports clips, ghost runs, AI training, deterministic bug repro. What gets recorded? You can capture raw hardware events (faithful, but coupled to the device — change a binding and the replay breaks) or actions (portable across rebinds, but you’ve lost the recognition timing and the device-specific texture of how the player got there). Both are defensible; the architecture has to commit somewhere. Where in the pipeline does the recorder tap in, and what’s the cost of the choice?